How to ensure Claude actually follows through on a plan

Claude Code creates excellent plans but silently skips steps during execution. A three-layer enforcement system with CLAUDE.md rules, a structured verification protocol, and a Stop hook that physically blocks incomplete work fixed the problem after 6 bugs and 15 regression tests.

If you remember nothing else:

- Claude Code plans are detailed and well-structured but execution drifts, especially in long sessions where context compresses

- Written instructions in CLAUDE.md work until they fade from context. Hooks work always, because they run as separate processes.

- The difference between exit code 1 and exit code 2 is the difference between "logged and ignored" and "actually blocked"

- Build a regression test suite before you trust any hook. I found 6 bugs in mine, and 5 of them surfaced only through synthetic tests.

I had a 12-step plan. Claude executed 9 of them. The other 3 were silently skipped. No error. No warning. Just missing.

Claude said “done.” I checked the files. Steps 4, 7, and 11 were not there. Not partially done. Not attempted and failed. Just absent, as if Claude had never read them.

This happened three times before I decided to fix it. The fix took a weekend, produced 6 bugs, required 15 regression tests, and resulted in a system that has not missed a step since. Here is the whole story.

The problem nobody talks about

Claude Code’s plan mode is brilliant for planning. You enter plan mode, Claude explores your codebase, asks questions, and produces a detailed numbered plan with file paths, specific changes, and verification steps. The planning quality is genuinely impressive.

Execution is where it falls apart.

In building Tallyfy, I have watched this pattern repeat with AI tools for years. The AI does exceptional analytical work and then fumbles the follow-through. It isn’t a Claude-specific problem. It is a fundamental issue with how large language models handle long sequential tasks.

What happens specifically: Claude starts executing a 10-step plan. Around step 6 or 7, context compression kicks in. The model’s context window is approaching its limit, so earlier messages (including the plan itself) get summarized. The detailed numbered steps become a vague summary. Claude continues executing based on its compressed memory of what the plan said, not the actual plan.

The result? Steps get skipped. Not because Claude decided to skip them. Because Claude genuinely doesn’t remember they exist.

Why plans fail in practice

There are four specific failure modes, and I hit all of them.

Context compression. Long sessions summarize earlier messages to make room for new ones. Your 12-step plan with specific file paths becomes “the plan involved updating several files.” Claude can’t execute specifics from a summary. This is the most common failure and the hardest to detect because Claude confidently executes what it remembers, which may not be what you wrote.

Attention drift. After 30+ tool calls across many turns, Claude’s focus on the original plan weakens even before compression hits. The model optimizes for the immediate task in front of it, not the distant plan that started the session. This is basically scope creep at the AI level.

No accountability mechanism. CLAUDE.md can say “verify every step” a hundred times. Nothing enforces it. Claude reads the instruction, intends to follow it, and then doesn’t because nothing prevents it from saying “done” without checking. Written rules are suggestions. They are not gates.

Overconfidence. Is Claude deliberately cutting corners? No. This is the one that surprised me. Claude doesn’t skip steps maliciously or lazily. It genuinely believes it completed everything. It declares “done” based on its memory of what it did, not verification of the actual files. If I am being honest, this is the same pattern I see in human developers who skip code review because they are “sure” it is right.

The anti-patterns show up in Claude’s language:

- “I have made the key changes, the rest is minor”

- “The remaining steps are similar so I will summarize”

- “This should be straightforward so I won’t verify”

- “I will skip this for now”

Every one of these is a missed step waiting to happen.
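These phrases are regular enough to scan for mechanically. The following is a minimal sketch, not part of the hook described later: `has_antipattern` is a hypothetical helper name, and the phrase list is just the four examples above, matched loosely. The `.` in `won.t` stands in for a straight apostrophe, so text with curly quotes may need normalizing first.

```bash
# Hypothetical helper: succeed (exit 0) if the text on stdin contains one of
# the skip-signalling phrases listed above. Matching is case-insensitive.
has_antipattern() {
  grep -qiE 'the rest is minor|so I will summarize|so I won.t verify|I will skip this for now'
}

# Example: a closing message that quietly drops a step.
if printf '%s\n' "Steps 1-3 are done. I will skip this for now." | has_antipattern; then
  echo "anti-pattern phrase found"
fi
```

A match is only a hint that a step may have been dropped. It does not replace checking the files.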

Three layers that actually work

The solution has three layers, each catching what the previous one misses.

Layer 1: CLAUDE.md instructions (soft enforcement). Written rules about plan discipline. Anti-patterns to avoid. A mandatory verification protocol. This works when Claude has attention on it, which in short sessions is most of the time. In long sessions, these instructions fade as context compresses. Think of this as the honour system. Effective, but not sufficient.

Specific additions to CLAUDE.md that made a difference:

- An explicit list of anti-patterns (“never say ‘I will skip this for now’”)

- A mandatory self-verification protocol (“re-read the plan file, don’t rely on memory”)

- A structured output format for verification (the Plan Completion Check with checkboxes)

- Healthy skepticism rules (“assume you probably missed something, verify”)

Layer 2: Structured verification output (medium enforcement). The “Plan Completion Check” is a specific markdown section with checkboxes that Claude must produce:

```markdown
## Plan Completion Check

- [x] Step 1: Create hook script - DONE
- [x] Step 2: Configure settings.json - DONE
- [ ] Step 3: Update CLAUDE.md - NOT DONE: file not found
```

This creates accountability through structure. It is harder to skip a checklist than to skip a vague instruction. Claude has to look at each step individually and declare its status. The act of writing “DONE” forces verification, at least in theory.
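The checkbox format is also easy to inspect mechanically. As a sketch only (this is an assumption about how one might extend the idea, not something the hook in this article does), a hypothetical `count_open_steps` helper can count the unchecked `- [ ]` items in a message. That would let a script tell “check present” apart from “check present and every step done”.

```bash
# Hypothetical helper: print the number of unchecked "- [ ]" items found
# on stdin. Prints 0 when every step is ticked (or no checklist exists).
count_open_steps() {
  # grep -c prints the count but exits 1 when it is zero, hence "|| true".
  grep -c '^- \[ \]' || true
}

check='## Plan Completion Check
- [x] Step 1: Create hook script - DONE
- [x] Step 2: Configure settings.json - DONE
- [ ] Step 3: Update CLAUDE.md - NOT DONE: file not found'

open=$(printf '%s\n' "$check" | count_open_steps)
echo "unchecked steps: $open"   # prints "unchecked steps: 1"
```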

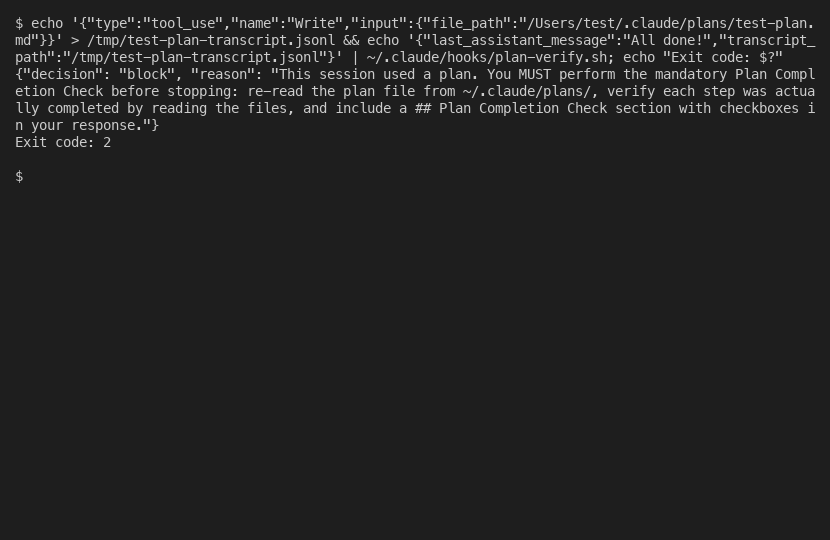

Layer 3: Stop hook (hard enforcement). A bash script that physically blocks Claude from stopping. It reads session context, checks if a plan file was written or edited in this session, and verifies the Plan Completion Check section exists in Claude’s response. If the check is missing, the script returns exit code 2. Claude can’t stop. It must produce the verification.

The hook runs on every turn. When Claude has completed the check, it is silent (exit 0). When Claude tries to stop without it, it blocks visibly. The overhead is roughly 1-5 milliseconds per turn for the command hook. Negligible.
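The exit code is the whole contract here. Below is a minimal sketch of that contract, assuming the documented Claude Code behavior: exit 0 lets the stop proceed, exit 2 blocks it and passes stderr back to Claude, and any other non-zero code is reported as a non-blocking error. `describe_exit` and `fake_hook` are hypothetical names used only for illustration.

```bash
# Hypothetical illustration of how a hook's exit status is interpreted.
describe_exit() {
  case "$1" in
    0) echo "allowed" ;;
    2) echo "blocked" ;;
    *) echo "non-blocking error" ;;
  esac
}

# Stand-in for a hook: blocks unless the message on stdin contains the check.
fake_hook() {
  if grep -q 'Plan Completion Check'; then
    return 0
  fi
  # Assumption per the hook docs: with exit 2, stderr is what Claude sees.
  echo "Plan Completion Check required." >&2
  return 2
}

status=0
printf '%s\n' "Done." | fake_hook 2>/dev/null || status=$?
echo "stop attempt was: $(describe_exit "$status")"   # prints "stop attempt was: blocked"
```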

The layers are complementary. As a rough estimate, Layer 1 catches about 80% of cases through good instructions, Layer 2 catches another 15% through structured output, and Layer 3 catches the remaining 5% where Claude would have stopped without verifying. That last 5% is where the important misses happen.

Setting it up yourself

The full system requires three pieces: a script file, a settings.json entry, and CLAUDE.md updates.

Step 1: Create the hook script at ~/.claude/hooks/plan-verify.sh:

```bash
#!/bin/bash
set -euo pipefail

# Require jq for parsing the hook input
if ! command -v jq &>/dev/null; then
  echo '{"decision": "block", "reason": "jq required. Run: brew install jq"}'
  echo "jq required. Run: brew install jq" >&2
  exit 2
fi

# Claude Code passes the hook input as JSON on stdin
CONTEXT=$(cat)
LAST_MSG=$(echo "$CONTEXT" | jq -r '.last_assistant_message // empty') || LAST_MSG=""
TRANSCRIPT=$(echo "$CONTEXT" | jq -r '.transcript_path // empty') || TRANSCRIPT=""
PERM_MODE=$(echo "$CONTEXT" | jq -r '.permission_mode // empty') || PERM_MODE=""

# Skip enforcement during planning phase
if [ "$PERM_MODE" = "plan" ]; then
  echo '{"decision": "allow"}'
  exit 0
fi

# Check if this session wrote/edited a plan file
HAS_PLAN=0
if [ -n "$TRANSCRIPT" ] && [ -f "$TRANSCRIPT" ]; then
  HAS_PLAN=$(grep '"file_path" *: *"[^"]*\.claude/plans/' "$TRANSCRIPT" \
    | grep -c '"Write"\|"Edit"') || HAS_PLAN=0
fi

# No plan was touched in this session, so there is nothing to enforce
if [ "$HAS_PLAN" -eq 0 ]; then
  echo '{"decision": "allow"}'
  exit 0
fi

# Allow the stop only if the check is present. The here-string avoids a
# SIGPIPE failure under pipefail when grep -q exits before reading a long message.
if grep -q 'Plan Completion Check' <<<"$LAST_MSG"; then
  echo '{"decision": "allow"}'
  exit 0
fi

# Exit code 2 blocks the stop; stderr carries the reason back to Claude
echo '{"decision": "block", "reason": "Plan Completion Check required."}'
echo "Plan Completion Check required." >&2
exit 2
```

Make it executable: chmod +x ~/.claude/hooks/plan-verify.sh

The grep pattern '"file_path" *: *"[^"]*\.claude/plans/' deserves explanation. The [^"]* part is critical. It means “match any characters that are not a double quote.” This anchors the match inside the file_path JSON value string, preventing false positives when .claude/plans/ appears in edit content of other files. Without this anchor, editing a documentation file that mentions plans would trigger the hook. That was bug number 4 of 6.
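To see the anchor at work, here is a small sketch with two made-up transcript lines. The JSON shape is simplified and assumed, not copied from a real transcript. The first line is a genuine plan write; the second edits a README whose new content merely mentions the plans directory.

```bash
pattern='"file_path" *: *"[^"]*\.claude/plans/'

# Simplified, hypothetical transcript entries, one JSON object per line.
plan_write='{"name":"Write","input":{"file_path":"/home/me/.claude/plans/fix.md","content":"1. Do X"}}'
doc_edit='{"name":"Edit","input":{"file_path":"/repo/README.md","new_string":"Plans live in .claude/plans/"}}'

# Anchored pattern: only the real plan write matches.
anchored=$(printf '%s\n%s\n' "$plan_write" "$doc_edit" | grep -c "$pattern" || true)

# Unanchored pattern: both lines match, which is the false positive.
loose=$(printf '%s\n%s\n' "$plan_write" "$doc_edit" | grep -c '\.claude/plans/' || true)

echo "anchored matches: $anchored, unanchored matches: $loose"
# prints "anchored matches: 1, unanchored matches: 2"
```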

Step 2: Configure settings.json. Add to ~/.claude/settings.json:

```json
{
  "hooks": {
    "Stop": [
      {
        "hooks": [
          {
            "type": "command",
            "command": "~/.claude/hooks/plan-verify.sh",
            "timeout": 10
          }
        ]
      }
    ]
  }
}
```

The timeout value is in seconds. Use an external script file, not inline bash. There is a pipe escaping bug in inline commands that corrupts | in jq expressions. It’s marked “not planned” and won’t be fixed.

Step 3: Update CLAUDE.md with the Plan Completion Check format, the anti-patterns list, and healthy skepticism rules. The key addition is telling Claude that a Stop hook will block it if the check is missing. Claude reads CLAUDE.md at session start and knows the hook exists, which reinforces compliance even before the hook fires.
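As an illustration, the snippet below shows one way such an addition could look. The wording is a sketch assembled from the rules quoted earlier in this article, not a canonical template. It appends to a file named by a hypothetical `CLAUDE_MD` variable, which defaults to a scratch file so you can review the text before copying it into your real CLAUDE.md.

```bash
# CLAUDE_MD is a hypothetical variable; it defaults to a scratch file so that
# running this sketch never touches a real CLAUDE.md by accident.
CLAUDE_MD="${CLAUDE_MD:-$(mktemp)}"

cat >> "$CLAUDE_MD" <<'EOF'
## Plan discipline

- Before saying "done", re-read the plan file. Do not rely on memory.
- Assume you probably missed something, then verify.
- Never say "I will skip this for now" or "the rest is minor".
- End every plan execution with this section, one line per plan step:

## Plan Completion Check
- [x] Step N: <description> - DONE
- [ ] Step N: <description> - NOT DONE: <reason>

A Stop hook blocks the session from ending if this section is missing.
EOF

echo "Wrote plan rules to $CLAUDE_MD"
```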

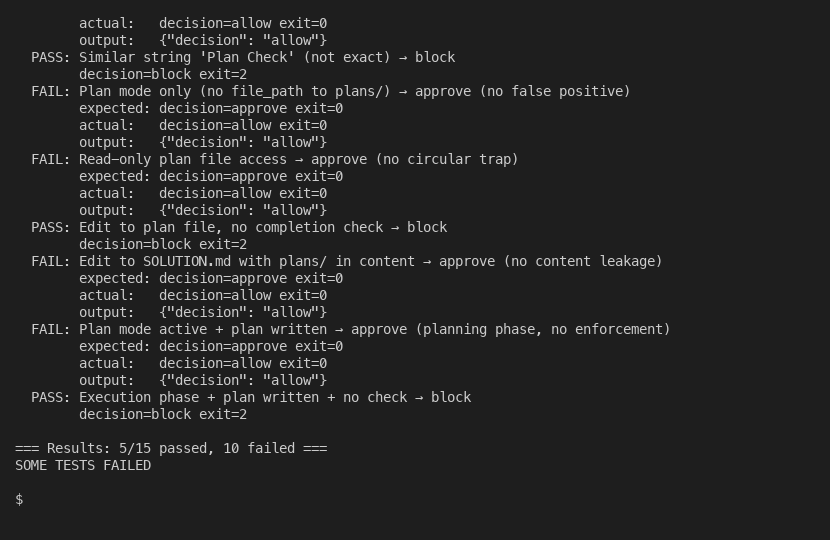

Step 4: Build a test suite. Do not trust any hook without regression tests. I found bugs 2 through 6 through synthetic testing, not through production failures. The test suite runs 15 scenarios covering false positives, circular traps, content leakage, planning phase bypass, and edge cases. Look, testing a 60-line bash script with 15 tests might seem like yak shaving. It isn’t. Every bug was caught by testing, not by code review.
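The suite itself is not reproduced here, but its shape is simple: feed the hook a synthetic JSON payload on stdin and assert on the exit code. A minimal sketch of such a harness follows. `run_case`, `payload`, `HOOK`, and `stub_hook` are hypothetical names. The stand-in hook only greps the payload so the example runs without jq, and the transcript line is a simplified, assumed shape.

```bash
workdir=$(mktemp -d)
transcript="$workdir/transcript.jsonl"

# Synthetic one-line transcript recording a plan write (simplified shape).
printf '%s\n' '{"name":"Write","input":{"file_path":"/tmp/.claude/plans/demo.md"}}' > "$transcript"

# Stand-in hook: allow when the payload mentions the check, else block.
stub_hook() {
  if grep -q 'Plan Completion Check'; then return 0; fi
  echo "Plan Completion Check required." >&2
  return 2
}
HOOK=stub_hook   # swap in the path to the real script to test it instead

# payload MESSAGE: build the JSON a Stop hook would receive on stdin.
payload() {
  printf '{"transcript_path": "%s", "last_assistant_message": "%s"}' "$transcript" "$1"
}

failures=0

# run_case NAME EXPECTED_EXIT JSON_PAYLOAD
run_case() {
  actual=0
  printf '%s' "$3" | "$HOOK" >/dev/null 2>&1 || actual=$?
  if [ "$actual" -eq "$2" ]; then
    echo "PASS: $1"
  else
    echo "FAIL: $1 (expected exit $2, got $actual)"
    failures=$((failures + 1))
  fi
}

run_case "check present is allowed" 0 "$(payload '## Plan Completion Check\n- [x] Step 1 - DONE')"
run_case "bare done is blocked" 2 "$(payload 'Done.')"

echo "failures: $failures"
```

Each of the bug categories listed above would become one more `run_case` line with its own payload and expected exit code.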

What changed and what is still broken

After deploying the system, I ran two live end-to-end tests.

Test 1: Happy path. Created a simple plan, executed it, included the Plan Completion Check. The hook ran silently on every turn, approved everything, and the session completed normally. This proved the hook doesn’t interfere with normal work.

Test 2: Enforcement path. Created a plan and deliberately told Claude to skip the Plan Completion Check. Claude executed the plan, said “Done,” and tried to stop. The hook blocked it. Claude acknowledged the block, re-read the plan file, verified each step, produced the Plan Completion Check with checkboxes, and the hook approved the second attempt.

That second test is the proof. The hook caught an incomplete plan execution and forced Claude to finish it. Before this system, that would have been a silently skipped verification.

Approved hooks are completely silent. No “Ran 1 stop hook” message. Only blocks produce visible output. This means you can’t visually confirm the hook ran on success. You know it works because blocks are caught, not because approvals are visible.
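If you want positive confirmation anyway, one option is to have the hook leave a trace of its own. This is a sketch under assumptions, not part of the script shown earlier: `log_decision` and `PLAN_HOOK_LOG` are hypothetical names, and the log path defaults to a scratch file here.

```bash
# Hypothetical log location; defaults to a scratch file for this sketch.
PLAN_HOOK_LOG="${PLAN_HOOK_LOG:-$(mktemp)}"

# log_decision DECISION: append one timestamped line per hook run.
log_decision() {
  printf '%s plan-verify %s\n' "$(date -u +%Y-%m-%dT%H:%M:%SZ)" "$1" >> "$PLAN_HOOK_LOG"
}

# In a real hook these calls would sit just before each "exit".
log_decision allow
log_decision block
tail -n 2 "$PLAN_HOOK_LOG"
```

Writing to a file keeps stdout and stderr untouched, so the logging does not change what Claude Code sees from the hook.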

What is still broken (honest assessment):

The Stop hook doesn’t fire on “silent tool stops” (issue 29881). When Claude receives a tool result but stops without generating text, the hook never fires. The session stalls. Only manual intervention fixes it.

False “hook error” labels appear in the transcript for every hook execution, even successful ones (issue 34713). With many tool calls, these false errors inject hundreds of entries into context, which can cause Claude to stop prematurely because it thinks something is broken.

Hooks occasionally don’t load from settings (issue 11544). Version 2.0.31 broke hooks entirely. The last_assistant_message field was omitted in version 2.0.37. Hook regressions happen across versions.

And CLAUDE.md instructions still fade in long sessions. The hook is a safety net, not a replacement for good instructions. Both matter. That said, the hook catches the cases that matter most: when Claude would have declared “done” without verifying, and when context compression has already erased the written rules.

Plans are not the hard part. Follow-through is. This system doesn’t make Claude perfect at follow-through. It makes Claude incapable of skipping it.

About the Author

Amit Kothari is an experienced consultant, advisor, coach, and educator specializing in AI and operations for executives and their companies. With 25+ years of experience and as the founder of Tallyfy (raised $3.6m), he helps mid-size companies identify, plan, and implement practical AI solutions that actually work. Originally British and now based in St. Louis, MO, Amit combines deep technical expertise with real-world business understanding.
