Claude Code SOC 2 compliance - what your auditor needs to know

Your auditor does not care about Anthropic marketing promises or vendor certifications alone. They need evidence of YOUR controls around Claude Code, data handling documentation, and audit trails that prove your AI coding tool is not creating compliance gaps in your SOC 2 framework. IBM found 97% of AI-breached organizations lacked proper access controls.

If you remember nothing else:

- Anthropic's SOC 2 Type II certification does not replace YOUR controls. Auditors want your policies, your access logs, your vendor risk assessment.

- Claude Code subagents inherit tool access by default, creating a new access category your security policy must address explicitly.

- Document data classification rules before code ever hits the API. Miss one piece of the chain and you have an audit finding.

Your auditor isn’t going to read Anthropic’s marketing pages.

They want your policies. Your access controls. Your audit logs. Your vendor risk assessment. Yes, Anthropic has SOC 2 Type II certification, though “certification” is technically the wrong word: a SOC 2 engagement produces an auditor’s attestation report, not a certificate. But it doesn’t replace the controls you need for Claude Code in a SOC 2 environment. Not even close.

What actually shows up in evidence requests? That’s what this is about.

The data flow question your auditor will ask first

Walk into any SOC 2 Type II audit with AI coding tools and the opening question is always the same: where does the data go?

With Claude Code, your developers’ code snippets, prompts, and context get sent to Anthropic’s servers for processing. That’s not inherently bad. But it triggers specific documentation requirements under the Trust Services Criteria that most companies miss entirely.

Your auditor expects to see data classification for code being processed, encryption in transit and at rest documentation, data retention policies from Anthropic, and your business associate agreement or data processing addendum. Miss any one of these and you’ve got a finding.

The good news: Anthropic commits to not training on your data for API and Enterprise customers, and publishes retention and data handling policies you can reference in your compliance documentation. The bad news: you still need to document how YOU enforce data classification policies before code ever hits their API. And I think this is where teams genuinely underestimate the work. Anthropic’s controls don’t substitute for yours.
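To make "enforce data classification before code hits the API" concrete, here is a minimal sketch of a pre-send check. The pattern names and regular expressions are my own illustrative assumptions, not part of Claude Code or any Anthropic tooling, and a real policy would define its own rules.

```python
import re

# Hypothetical classification rules. A real policy would define its own patterns.
RESTRICTED_PATTERNS = {
    "aws_access_key": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def classify(text: str) -> list[str]:
    """Return the sorted names of restricted patterns found in the text."""
    return sorted(
        name for name, pattern in RESTRICTED_PATTERNS.items() if pattern.search(text)
    )


def may_send(text: str) -> bool:
    """Allow text to leave the machine only if no restricted pattern matched."""
    return not classify(text)
```

The point for your auditor is not the regexes. It is that the rule set lives in version control and every block decision can be logged as evidence that the policy is enforced.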

Access controls that hold up under scrutiny

SOC 2 auditors evaluate least privilege access as a core security control. When you deploy Claude Code, someone needs to own the access policy. Not loosely own it. Actually own it.

That means documented answers to specific questions. Which employees can use Claude Code, and based on what criteria? What repositories or codebases can they reach through the tool? How does access get provisioned and deprovisioned when people change roles? Where does the audit trail of usage actually live?

Claude Code offers granular permission controls including read-only defaults and explicit approval requirements for sensitive operations. Useful. Does that solve your access control problem? No. Claude Code introduced subagents, specialized AI assistants that run in their own context windows, operating concurrently in the background. Each subagent inherits tool access from the main session by default, including any Model Context Protocol (MCP) connections to external data sources.

That’s a new access category your policy needs to address. Which subagents can run? What tools can they reach? Who approved their permissions before launch? Write it down. Version control it. Review it quarterly.
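One way to "write it down" is to keep the subagent policy as structured data next to your code. The sketch below is an assumption about how you might model it; the field names, the example tool names, and the 90-day reading of "quarterly" are all mine, not a Claude Code format.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Assumption: "quarterly" is treated as 90 days.
REVIEW_INTERVAL = timedelta(days=90)


@dataclass(frozen=True)
class SubagentPolicy:
    """A hypothetical policy record for one approved subagent."""
    name: str
    allowed_tools: frozenset[str]
    approved_by: str
    last_reviewed: date


def is_tool_allowed(policy: SubagentPolicy, tool: str) -> bool:
    """True if the tool is on the subagent's approved list."""
    return tool in policy.allowed_tools


def overdue_reviews(policies: list[SubagentPolicy], today: date) -> list[str]:
    """Names of subagents whose last review is older than the review interval."""
    return [p.name for p in policies if today - p.last_reviewed > REVIEW_INTERVAL]
```

Run `overdue_reviews` on a schedule and file the output. That gives you dated evidence that the quarterly review actually happens.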

Turns out, mid-size companies consistently get stuck here because access control documentation lives in someone’s head rather than in a formal policy. That doesn’t survive audit review.

The vendor risk assessment nobody wants to do

SOC 2 frameworks require vendor risk assessments for any third party processing your data. Using Claude Code means you need a completed vendor risk questionnaire for Anthropic. Full stop.

Your auditor will ask for evidence you evaluated their financial stability, security certifications and compliance posture, incident response and breach notification procedures, data backup and disaster recovery capabilities, and contractual terms around liability and indemnification.

Dario Amodei’s Anthropic makes this easier by publishing compliance documentation including ISO 27001:2022 certification, ISO/IEC 42001:2023 for AI management systems, and HIPAA-configurable options. But you still need to document that YOU reviewed these, that YOU assessed residual risk, and that YOU have an approved vendor in your tracking system.

The regulatory pressure is real and expanding. Key provisions of the EU AI Act are now in effect, with high-risk AI system requirements taking effect from August 2026 and penalties up to 7% of global revenue. The CCPA’s automated decision-making rules were finalized in 2025, with compliance required by January 1, 2026. Over 21 US states now have privacy laws in effect. Your vendor risk assessment for any AI coding tool needs to account for this expanding regulatory surface, not just SOC 2.

A practical vendor tiering approach helps here. Not all vendors carry equal compliance risk. Classify them by data sensitivity: does this vendor process customer PII? Does it have access to production systems? Does it store regulated data? Tier 1 vendors, those handling sensitive customer data or with production access, warrant full SOC 2 report review. Tier 2 vendors with lower risk profiles can be assessed through publicly available compliance documentation plus a formal review attestation. This proportional approach is more defensible than treating every SaaS subscription identically. Mind you, auditors appreciate the risk-based reasoning because it mirrors how their own materiality assessments work.
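The tiering questions above translate into a few lines of logic. This is a sketch under my own assumptions: the field names are hypothetical, and I treat storing regulated data as a Tier 1 trigger alongside customer PII and production access.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vendor:
    """Answers to the three tiering questions for one vendor (hypothetical fields)."""
    name: str
    processes_customer_pii: bool
    has_production_access: bool
    stores_regulated_data: bool


def tier(vendor: Vendor) -> int:
    """Tier 1 if any high-risk answer is yes, otherwise Tier 2."""
    high_risk = (
        vendor.processes_customer_pii
        or vendor.has_production_access
        or vendor.stores_regulated_data  # assumption: regulated data counts as Tier 1
    )
    return 1 if high_risk else 2


def required_evidence(vendor: Vendor) -> list[str]:
    """Evidence to collect for the vendor, following the tiers described above."""
    if tier(vendor) == 1:
        return ["full SOC 2 report review"]
    return ["public compliance documentation review", "formal review attestation"]
```

Keeping the answers as data means the tier is reproducible. When an auditor asks why a vendor got the lighter review, you can point at the recorded answers rather than a memory.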

Template vendor assessment forms exist. Use one. File it. Reference it in your audit evidence. Honestly, this isn’t glamorous work, but it’s the kind that closes findings before they open.

Claude vs Copilot - key difference

Claude Code runs as a terminal-native tool that talks directly to Anthropic's API without routing through an intermediary backend server. Your vendor risk assessment covers one vendor. Thomas Dohmke's GitHub Copilot routes through GitHub's infrastructure, which means your assessment needs to cover both GitHub (Microsoft) and whichever model provider powers it. Neither approach is inherently better for SOC 2, but Claude Code's single-vendor data flow often simplifies evidence collection.

Why AI tools break standard change management

Traditional software has deterministic outputs. Same input, same output, same code review results. Predictable. Auditable.

AI models don’t work that way. Security research demonstrates that AI code review can be bypassed through prompt manipulation, and outputs shift with model versions and context windows. That creates a genuine, painful problem for SOC 2’s processing integrity criteria. Good luck explaining non-deterministic outputs to your auditor. The AICPA now explicitly requires companies to demonstrate that AI systems regularly generate complete, valid, accurate, and permitted outputs, which is difficult when your tool’s results shift with each model update.

Claude Code defaults to Claude Sonnet 4.6. Your auditor needs to see how you validate AI-generated code before it reaches production, what testing protocols catch security vulnerabilities in AI suggestions, and how you track which model version was used for critical code changes.

Anthropic offers sandboxing features that isolate code execution and prevent unauthorized data access. Claude Code also introduced checkpoints, save points that let you roll back to any previous state. That’s genuinely useful for compliance. You can demonstrate exactly what changed and revert if something goes wrong. It won’t make AI outputs deterministic, but it gives you an auditable trail of state changes.

Document your code review process. Require human validation. Log which AI model version was active during code generation. Use checkpoints as your rollback evidence.
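Logging the model version per change can be as simple as one JSON record per commit. The sketch below is illustrative only: the field names are my assumptions, and how you obtain the model version string depends on your own setup.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def provenance_record(
    commit_sha: str,
    model_version: str,
    reviewer: str,
    when: Optional[datetime] = None,
) -> str:
    """Build one JSON line tying a commit to a model version and a human reviewer."""
    if not reviewer:
        # Human validation is mandatory, so refuse to record a change without one.
        raise ValueError("a human reviewer is required")
    when = when or datetime.now(timezone.utc)
    return json.dumps(
        {
            "commit_sha": commit_sha,
            "model_version": model_version,  # hypothetical: supplied by your tooling
            "reviewer": reviewer,
            "recorded_at": when.isoformat(),
        },
        sort_keys=True,
    )
```

Append each record to a log your SIEM already ingests. The refusal to record a change without a reviewer is the control; the JSON line is the evidence.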

The audit trail that actually matters

Your auditor wants logs. Not clunky marketing claims about logging capabilities. Actual, queryable, timestamped logs of who did what with Claude Code.

Minimum requirements: user authentication events, data access by repository or codebase, code modifications or suggestions accepted, and security policy violations or approval overrides.
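Those four categories map naturally onto a small event schema. What follows is a sketch of one possible record shape, not a format that Claude Code or Anthropic emits; the event type names and fields are assumptions.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional

# Hypothetical event types mirroring the minimum requirements listed above.
EVENT_TYPES = {"auth", "data_access", "code_change", "policy_violation"}


@dataclass(frozen=True)
class AuditEvent:
    event_type: str
    user: str
    repository: str
    detail: str
    timestamp: str  # ISO 8601, UTC


def make_event(
    event_type: str,
    user: str,
    repository: str,
    detail: str,
    now: Optional[datetime] = None,
) -> AuditEvent:
    """Create a timestamped audit event, rejecting unknown event types."""
    if event_type not in EVENT_TYPES:
        raise ValueError(f"unknown event type: {event_type}")
    now = now or datetime.now(timezone.utc)
    return AuditEvent(event_type, user, repository, detail, now.isoformat())


def to_json_line(event: AuditEvent) -> str:
    """Serialize the event as one JSON line, ready to append to a log file."""
    return json.dumps(asdict(event), sort_keys=True)
```

One JSON object per line is deliberately boring. Most log aggregation platforms can ingest it without a custom parser, which is what makes the logs queryable.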

Anthropic provides audit logging for compliance through their Enterprise plan. But vendor-level logging doesn’t replace proper logging at YOUR level. You need evidence that someone reviews these logs, that anomalies get investigated, and that access violations trigger your incident response process.

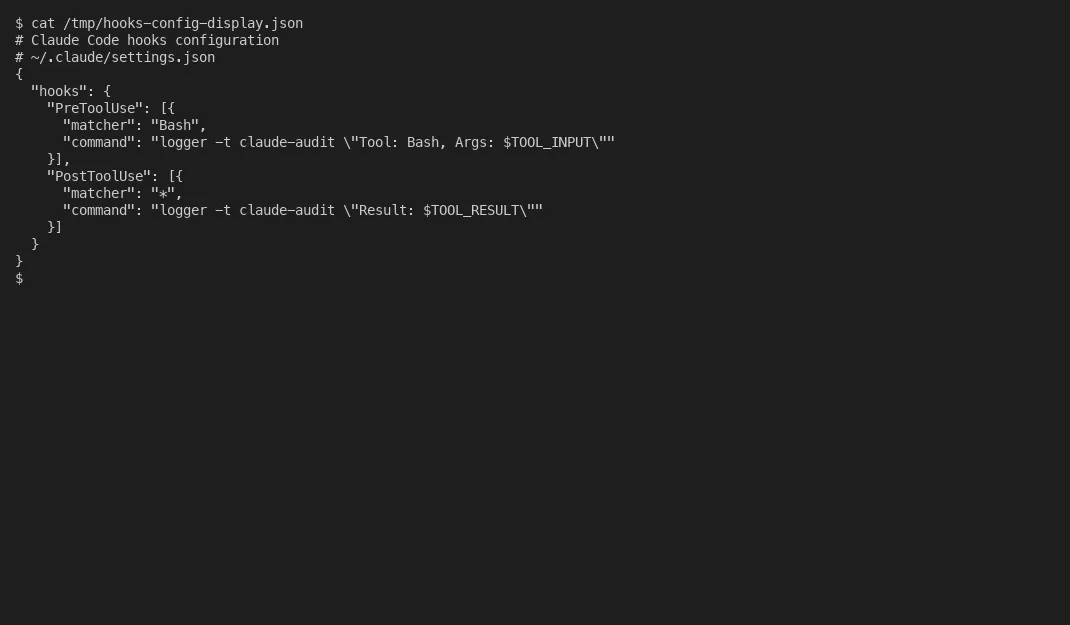

Claude Code added a hooks system that runs custom scripts at specific lifecycle points: pre-tool, post-tool, pre-commit. This is probably one of the most useful compliance features I’ve seen in an AI coding tool. You can wire up automated checks that run before any code modification, log every tool invocation with timestamps, and block commits that don’t meet your security policies. These hooks generate the kind of granular, machine-readable evidence that auditors actually want.

When controls, risks, and evidence items live in structured data files rather than platform databases or spreadsheets, AI can parse them directly. It can calculate what is overdue, identify coverage gaps where controls lack supporting evidence, cross-reference risk items against mitigating controls, and generate status reports without manual effort. Machine-readable tracking makes AI-assisted compliance verification possible in a way that PDF-based or spreadsheet-based evidence management never could. The real value of audit trails is not just having them. It is being able to query them programmatically and surface problems before auditors do.
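Here is roughly what "query them programmatically" can look like once controls and evidence are plain records. The dictionary keys and the control IDs are hypothetical, chosen only for illustration.

```python
from datetime import date


def coverage_gaps(controls: list[dict], evidence: list[dict]) -> list[str]:
    """IDs of controls that have no evidence item pointing at them."""
    covered = {item["control_id"] for item in evidence}
    return [control["id"] for control in controls if control["id"] not in covered]


def overdue(evidence: list[dict], today: date) -> list[str]:
    """IDs of evidence items whose due date (ISO format) has already passed."""
    return [
        item["id"] for item in evidence if date.fromisoformat(item["due"]) < today
    ]
```

A short usage example, with made-up records:

```python
controls = [{"id": "CTRL-1"}, {"id": "CTRL-2"}]
evidence = [{"id": "EV-1", "control_id": "CTRL-1", "due": "2025-03-31"}]

print(coverage_gaps(controls, evidence))       # CTRL-2 has no evidence
print(overdue(evidence, date(2025, 4, 15)))    # EV-1 is past due
```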

Set up automated alerts for high-risk events straightaway. Document who receives alerts and how quickly they respond. Keep logs for as long as your compliance framework requires: typically at least 90 days for SOC 2 Type II observation periods, and often longer for regulated industries.
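Both checks are easy to automate. This sketch assumes a hypothetical set of high-risk event types and uses the 90-day figure above as the default retention requirement; substitute whatever your framework actually mandates.

```python
from datetime import date

# Hypothetical event types that should page someone.
HIGH_RISK_EVENTS = {"policy_violation", "approval_override"}


def needs_alert(event_type: str) -> bool:
    """True if the event type is on the high-risk list."""
    return event_type in HIGH_RISK_EVENTS


def retention_gap_days(oldest_log: date, today: date, required_days: int = 90) -> int:
    """Days by which retained logs fall short of the requirement (0 if met)."""
    days_held = (today - oldest_log).days
    return max(0, required_days - days_held)
```

A nonzero result from `retention_gap_days` is worth an alert of its own. A missing month of logs is far cheaper to discover yourself than during fieldwork.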

Your security team probably already has a SIEM or log aggregation platform. Feed Claude Code audit logs into it. IBM’s 2025 report found that 97% of organizations that experienced AI-related breaches lacked proper AI access controls. Don’t be in that group.

SOC 2 compliance for AI coding tools basically comes down to the same fundamentals as any third-party system: document your controls, prove they work consistently, and maintain evidence that you enforce them.

The governance gap is wide. Relatively few organizations have an established AI governance framework, and 63% of organizations either lacked an AI governance policy or were still developing one. ISACA’s analysis of 2025 incidents concluded that the biggest AI failures were organizational, not technical: weak controls, unclear ownership, misplaced trust.

Claude Code provides the technical capabilities. You own the policies, the documentation, and the evidence your auditor needs to close findings. We’ve documented how we replaced our compliance platform entirely using this approach.

Map the data flow. Build out access controls and logging. Run a vendor risk assessment. Actually, that makes it sound easier than it is. The basics haven’t changed. Just the tools have.

About the Author

Amit Kothari is an experienced consultant, advisor, coach, and educator specializing in AI and operations for executives and their companies. With 25+ years of experience and as the founder of Tallyfy (raised $3.6m), he helps mid-size companies identify, plan, and implement practical AI solutions that actually work. Originally British and now based in St. Louis, MO, Amit combines deep technical expertise with real-world business understanding.

Disclaimer: The content in this article represents personal opinions based on extensive research and practical experience. While every effort has been made to ensure accuracy through data analysis and source verification, this should not be considered professional advice. Always consult with qualified professionals for decisions specific to your situation.